Redis强大的备份同步工具Redis-Shake

redis-shake是阿里云Redis&MongoDB团队开源的用于redis数据同步的工具。下载地址:这里。

基本功能

redis-shake是我们基于redis-port基础上进行改进的一款产品。它支持解析、恢复、备份、同步四个功能。以下主要介绍同步sync。

- 恢复restore:将RDB文件恢复到目的redis数据库。

- 备份dump:将源redis的全量数据通过RDB文件备份起来。

- 解析decode:对RDB文件进行读取,并以json格式解析存储。

- 同步sync:支持源redis和目的redis的数据同步,支持全量和增量数据的迁移,支持从云下到阿里云云上的同步,也支持云下到云下不同环境的同步,支持单节点、主从版、集群版之间的互相同步。需要注意的是,如果源端是集群版,可以启动一个RedisShake,从不同的db结点进行拉取,同时源端不能开启move slot功能;对于目的端,如果是集群版,写入可以是1个或者多个db结点。

- 同步rump:支持源redis和目的redis的数据同步,仅支持全量的迁移。采用scan和restore命令进行迁移,支持不同云厂商不同redis版本的迁移。

- 指定Key恢复:filter.key.whitelist可以过滤指定前辍的key,但是只适用于

restore,syncandrump三种模式。

基本原理

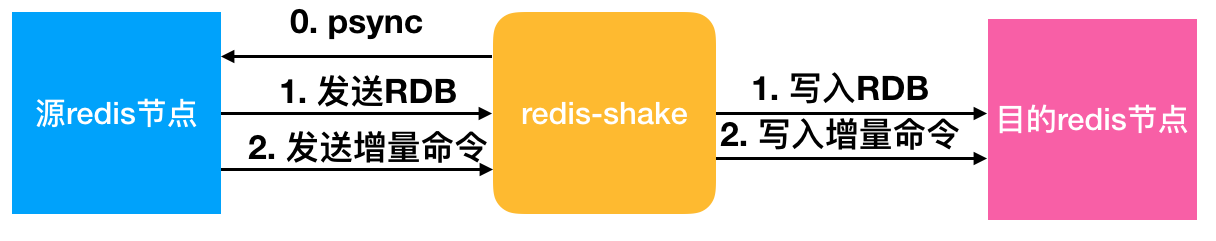

redis-shake的基本原理就是模拟一个从节点加入源redis集群,首先进行全量拉取并回放,然后进行增量的拉取(通过psync命令)。如下图所示:

如果源端是集群模式,只需要启动一个redis-shake进行拉取,同时不能开启源端的move slot操作。如果目的端是集群模式,可以写入到一个结点,然后再进行slot的迁移,当然也可以多对多写入。

目前,redis-shake到目的端采用单链路实现,对于正常情况下,这不会成为瓶颈,但对于极端情况,qps比较大的时候,此部分性能可能成为瓶颈,后续我们可能会计划对此进行优化。另外,redis-shake到目的端的数据同步采用异步的方式,读写分离在2个线程操作,降低因为网络时延带来的同步性能下降。

高效性

全量同步阶段并发执行,增量同步阶段异步执行,能够达到毫秒级别延迟(取决于网络延迟)。同时,我们还对大key同步进行分批拉取,优化同步性能。

监控

用户可以通过我们提供的restful拉取metric来对redis-shake进行实时监控:curl 127.0.0.1:9320/metric。

校验

如何校验同步的正确性?可以采用我们开源的redis-full-check,具体原理可以参考这篇博客。

支持

支持2.8-5.0版本的同步。

支持codis。

支持云下到云上,云上到云上,云上到云下(阿里云目前支持主从版),其他云到阿里云等链路,帮助用户灵活构建混合云场景。

开发中的功能

断点续传。支持断开后按offset恢复,降低因主备切换、网络抖动造成链路断开重新同步拉取全量的性能影响。

说明

多活支持的支持,需要依赖内核的能力,目前单单依赖通道层面无法解决该问题。redis内核层面需要提供gtid的概念,以及CRDT的解决冲突的方式才可以。

参数解释:

启动示例(以sync举例):

vinllen@ ~/redis-shake/bin$ ./redis-shake -type=sync -conf=../conf/redis-shake.conf

conf路径下的redis-shake.conf存放的是配置文件,其内容及部分中文注释如下

# this is the configuration of redis-shake.

# if you have any problem, please visit https://github.com/alibaba/RedisShake/wiki/FAQ

# current configuration version, do not modify.

# 当前配置文件的版本号,请不要修改该值。

conf.version = 1

# ------------------------------------------------------

# id

id = redis-shake

# log file,日志文件,不配置将打印到stdout (e.g. /var/log/redis-shake.log )

log.file =

# log level: "none", "error", "warn", "info", "debug". default is "info".

log.level = info

# pid path,进程文件存储地址(e.g. /var/run/),不配置将默认输出到执行下面,

# 注意这个是目录,真正的pid是`{pid_path}/{id}.pid`

pid_path =

# pprof port.

system_profile = 9310

# restful port, set -1 means disable, in `restore` mode RedisShake will exit once finish restoring RDB only if this value

# is -1, otherwise, it'll wait forever.

# restful port,查看metric端口, -1表示不启用,如果是`restore`模式,只有设置为-1才会在完成RDB恢复后退出,否则会一直block。

http_profile = 9320

# parallel routines number used in RDB file syncing. default is 64.

# 启动多少个并发线程同步一个RDB文件。

parallel = 32

# source redis configuration.

# used in `dump`, `sync` and `rump`.

# source redis type, e.g. "standalone" (default), "sentinel" or "cluster".

# 1. "standalone": standalone db mode.

# 2. "sentinel": the redis address is read from sentinel.

# 3. "cluster": the source redis has several db.

# 4. "proxy": the proxy address, currently, only used in "rump" mode.

# 源端redis的类型,支持standalone,sentinel,cluster和proxy四种模式,注意:目前proxy只用于rump模式。

source.type = standalone

# ip:port

# the source address can be the following:

# 1. single db address. for "standalone" type.

# 2. ${sentinel_master_name}:${master or slave}@sentinel single/cluster address, e.g., mymaster:master@127.0.0.1:26379;127.0.0.1:26380, or @127.0.0.1:26379;127.0.0.1:26380. for "sentinel" type.

# 3. cluster that has several db nodes split by semicolon(;). for "cluster" type. e.g., 10.1.1.1:20331;10.1.1.2:20441.

# 4. proxy address(used in "rump" mode only). for "proxy" type.

# 源redis地址。对于sentinel或者开源cluster模式,输入格式为"master名字:拉取角色为master或者slave@sentinel的地址",别的cluster

# 架构,比如codis, twemproxy, aliyun proxy等需要配置所有master或者slave的db地址。

source.address = 127.0.0.1:20441

# password of db/proxy. even if type is sentinel.

source.password_raw = 123456

# auth type, don't modify it

source.auth_type = auth

# tls enable, true or false. Currently, only support standalone.

# open source redis does NOT support tls so far, but some cloud versions do.

source.tls_enable = false

# input RDB file.

# used in `decode` and `restore`.

# if the input is list split by semicolon(;), redis-shake will restore the list one by one.

# 如果是decode或者restore,这个参数表示读取的rdb文件。支持输入列表,例如:rdb.0;rdb.1;rdb.2

# redis-shake将会挨个进行恢复。

source.rdb.input = local

# the concurrence of RDB syncing, default is len(source.address) or len(source.rdb.input).

# used in `dump`, `sync` and `restore`. 0 means default.

# This is useless when source.type isn't cluster or only input is only one RDB.

# 拉取的并发度,如果是`dump`或者`sync`,默认是source.address中db的个数,`restore`模式默认len(source.rdb.input)。

# 假如db节点/输入的rdb有5个,但rdb.parallel=3,那么一次只会

# 并发拉取3个db的全量数据,直到某个db的rdb拉取完毕并进入增量,才会拉取第4个db节点的rdb,

# 以此类推,最后会有len(source.address)或者len(rdb.input)个增量线程同时存在。

source.rdb.parallel = 0

# for special cloud vendor: ucloud

# used in `decode` and `restore`.

# ucloud集群版的rdb文件添加了slot前缀,进行特判剥离: ucloud_cluster。

source.rdb.special_cloud =

# target redis configuration. used in `restore`, `sync` and `rump`.

# the type of target redis can be "standalone", "proxy" or "cluster".

# 1. "standalone": standalone db mode.

# 2. "sentinel": the redis address is read from sentinel.

# 3. "cluster": open source cluster (not supported currently).

# 4. "proxy": proxy layer ahead redis. Data will be inserted in a round-robin way if more than 1 proxy given.

# 目的redis的类型,支持standalone,sentinel,cluster和proxy四种模式。

target.type = standalone

# ip:port

# the target address can be the following:

# 1. single db address. for "standalone" type.

# 2. ${sentinel_master_name}:${master or slave}@sentinel single/cluster address, e.g., mymaster:master@127.0.0.1:26379;127.0.0.1:26380, or @127.0.0.1:26379;127.0.0.1:26380. for "sentinel" type.

# 3. cluster that has several db nodes split by semicolon(;). for "cluster" type.

# 4. proxy address. for "proxy" type.

target.address = 127.0.0.1:20551

# password of db/proxy. even if type is sentinel.

target.password_raw =

# auth type, don't modify it

target.auth_type = auth

# all the data will be written into this db. < 0 means disable.

target.db = -1

# tls enable, true or false. Currently, only support standalone.

# open source redis does NOT support tls so far, but some cloud versions do.

target.tls_enable = false

# output RDB file prefix.

# used in `decode` and `dump`.

# 如果是decode或者dump,这个参数表示输出的rdb前缀,比如输入有3个db,那么dump分别是:

# ${output_rdb}.0, ${output_rdb}.1, ${output_rdb}.2

target.rdb.output = local_dump

# some redis proxy like twemproxy doesn't support to fetch version, so please set it here.

# e.g., target.version = 4.0

target.version =

# use for expire key, set the time gap when source and target timestamp are not the same.

# 用于处理过期的键值,当迁移两端不一致的时候,目的端需要加上这个值

fake_time =

# how to solve when destination restore has the same key.

# rewrite: overwrite.

# none: panic directly.

# ignore: skip this key. not used in rump mode.

# used in `restore`, `sync` and `rump`.

# 当源目的有重复key,是否进行覆写

# rewrite表示源端覆盖目的端。

# none表示一旦发生进程直接退出。

# ignore表示保留目的端key,忽略源端的同步key。该值在rump模式下没有用。

key_exists = none

# filter db, key, slot, lua.

# filter db.

# used in `restore`, `sync` and `rump`.

# e.g., "0;5;10" means match db0, db5 and db10.

# at most one of `filter.db.whitelist` and `filter.db.blacklist` parameters can be given.

# if the filter.db.whitelist is not empty, the given db list will be passed while others filtered.

# if the filter.db.blacklist is not empty, the given db list will be filtered while others passed.

# all dbs will be passed if no condition given.

# 指定的db被通过,比如0;5;10将会使db0, db5, db10通过, 其他的被过滤

filter.db.whitelist =

# 指定的db被过滤,比如0;5;10将会使db0, db5, db10过滤,其他的被通过

filter.db.blacklist =

# filter key with prefix string. multiple keys are separated by ';'.

# e.g., "abc;bzz" match let "abc", "abc1", "abcxxx", "bzz" and "bzzwww".

# used in `restore`, `sync` and `rump`.

# at most one of `filter.key.whitelist` and `filter.key.blacklist` parameters can be given.

# if the filter.key.whitelist is not empty, the given keys will be passed while others filtered.

# if the filter.key.blacklist is not empty, the given keys will be filtered while others passed.

# all the namespace will be passed if no condition given.

# 支持按前缀过滤key,只让指定前缀的key通过,分号分隔。比如指定abc,将会通过abc, abc1, abcxxx

filter.key.whitelist =

# 支持按前缀过滤key,不让指定前缀的key通过,分号分隔。比如指定abc,将会阻塞abc, abc1, abcxxx

filter.key.blacklist =

# filter given slot, multiple slots are separated by ';'.

# e.g., 1;2;3

# used in `sync`.

# 指定过滤slot,只让指定的slot通过

filter.slot =

# filter lua script. true means not pass. However, in redis 5.0, the lua

# converts to transaction(multi+{commands}+exec) which will be passed.

# 控制不让lua脚本通过,true表示不通过

filter.lua = false

# big key threshold, the default is 500 * 1024 * 1024 bytes. If the value is bigger than

# this given value, all the field will be spilt and write into the target in order. If

# the target Redis type is Codis, this should be set to 1, please checkout FAQ to find

# the reason.

# 正常key如果不大,那么都是直接调用restore写入到目的端,如果key对应的value字节超过了给定

# 的值,那么会分批依次一个一个写入。如果目的端是Codis,这个需要置为1,具体原因请查看FAQ。

# 如果目的端大版本小于源端,也建议设置为1。

big_key_threshold = 524288000

# enable metric

# used in `sync`.

# 是否启用metric

metric = true

# print in log

# 是否将metric打印到log中

metric.print_log = false

# sender information.

# sender flush buffer size of byte.

# used in `sync`.

# 发送缓存的字节长度,超过这个阈值将会强行刷缓存发送

sender.size = 104857600

# sender flush buffer size of oplog number.

# used in `sync`. flush sender buffer when bigger than this threshold.

# 发送缓存的报文个数,超过这个阈值将会强行刷缓存发送,对于目的端是cluster的情况,这个值

# 的调大将会占用部分内存。

sender.count = 4095

# delay channel size. once one oplog is sent to target redis, the oplog id and timestamp will also

# stored in this delay queue. this timestamp will be used to calculate the time delay when receiving

# ack from target redis.

# used in `sync`.

# 用于metric统计时延的队列

sender.delay_channel_size = 65535

# enable keep_alive option in TCP when connecting redis.

# the unit is second.

# 0 means disable.

# TCP keep-alive保活参数,单位秒,0表示不启用。

keep_alive = 0

# used in `rump`.

# number of keys captured each time. default is 100.

# 每次scan的个数,不配置则默认100.

scan.key_number = 50

# used in `rump`.

# we support some special redis types that don't use default `scan` command like alibaba cloud and tencent cloud.

# 有些版本具有特殊的格式,与普通的scan命令有所不同,我们进行了特殊的适配。目前支持腾讯云的集群版"tencent_cluster"

# 和阿里云的集群版"aliyun_cluster",注释主从版不需要配置,只针对集群版。

scan.special_cloud =

# used in `rump`.

# we support to fetching data from given file which marks the key list.

# 有些云版本,既不支持sync/psync,也不支持scan,我们支持从文件中进行读取所有key列表并进行抓取:一行一个key。

scan.key_file =

# limit the rate of transmission. Only used in `rump` currently.

# e.g., qps = 1000 means pass 1000 keys per second. default is 500,000(0 means default)

qps = 200000

# enable resume from break point, please visit xxx to see more details.

# 断点续传开关

resume_from_break_point = false

# ----------------splitter----------------

# below variables are useless for current open source version so don't set.

# replace hash tag.

# used in `sync`.

replace_hash_tag = false

开源地址

redis-shake: https://github.com/aliyun/redis-shake